JavaScript SEO, JavaScript AEO, Agentic AI, AI Crawlers, Why We Re-Imagined EZY Connect, and why we built the EZY.ai WordPress and Shopify Server-Side Plugins.

At EZY.ai we originally built two ways for customers to integrate our platform.

The first is our WordPress plugin. It writes schema, metadata, FAQs, blog posts, AEO route files (Robots, LLMs, LLMs-full, etc.), and structured facts directly into the page HTML, server-side.

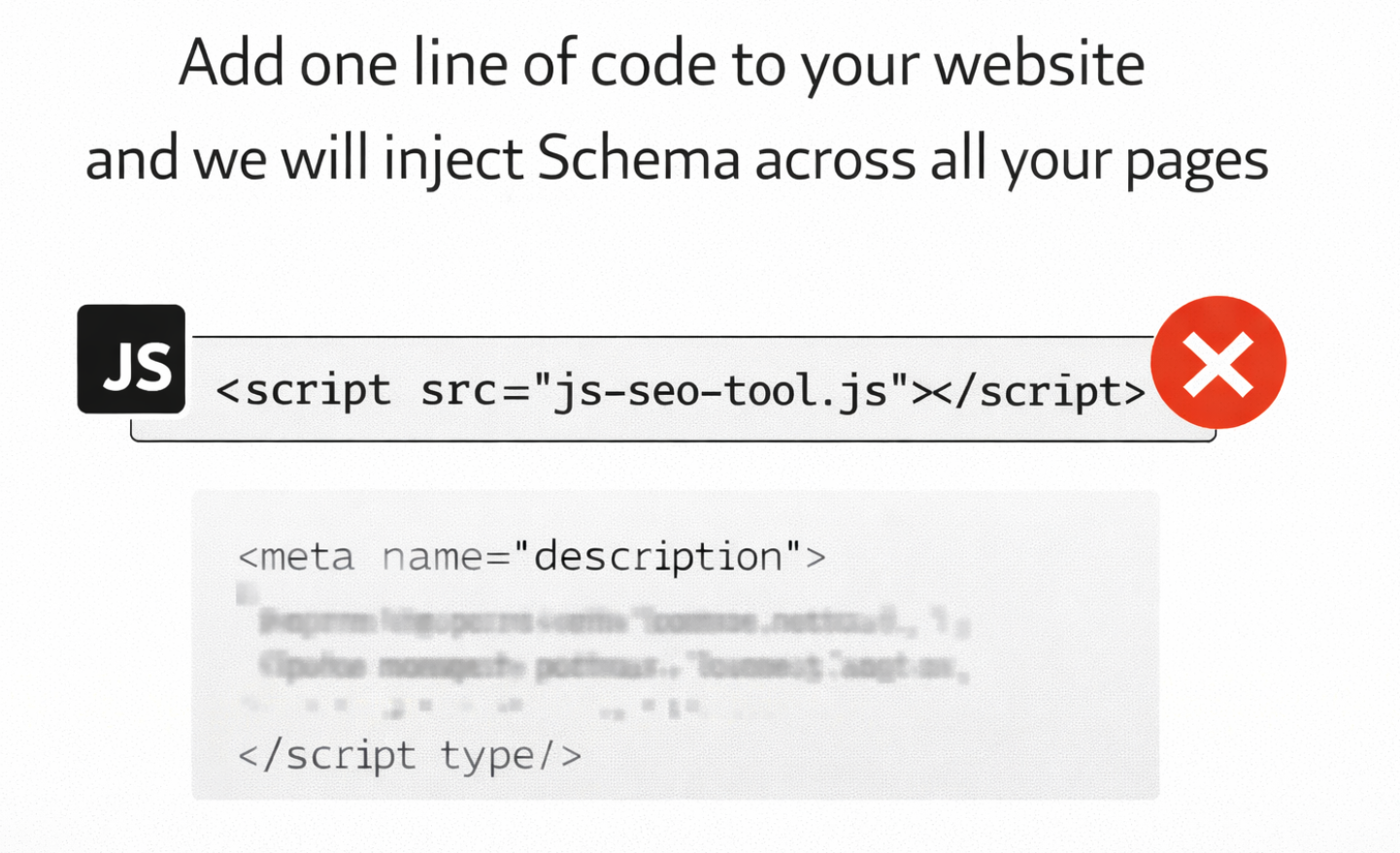

The second was something we called EZY Connect: a single JavaScript snippet that customers could drop into any site. That script allowed us to track AI agent visits, inject schema, and update meta descriptions dynamically.

Many leading AEO platforms rely primarily on something similar: a single JavaScript snippet added to your website.

At first glance, EZY Connect and other JavaScript snippets look ideal. One line of code. No CMS dependency. Fast deployment.

But once you start looking seriously at AEO (Answer Engine Optimization) rather than just classic SEO, the tradeoffs become impossible to ignore.

This post explains why.

The Core Problem: Client-Side Injection vs Real HTML

JavaScript snippets work by injecting metadata and schema after the page loads in the browser. That means:

- Meta descriptions are not in the original HTML response

- Schema markup is added via JavaScript

- The DOM looks correct to a human browser

- The raw HTML does not contain the data
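To make the distinction concrete, here is a minimal Python sketch that inspects only the raw HTML string, the way a non-rendering crawler would, and reports whether a meta description and any JSON-LD blocks are present. The class and function names (`RawHtmlAudit`, `audit_raw_html`) and both sample pages are hypothetical, made up for illustration; this is not EZY.ai code.

```python
from html.parser import HTMLParser


class RawHtmlAudit(HTMLParser):
    """Collects the meta description and JSON-LD blocks found in raw HTML."""

    def __init__(self):
        super().__init__()
        self.meta_description = None
        self.json_ld_blocks = []
        self._in_json_ld = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content")
        elif tag == "script" and (attrs.get("type") or "").lower() == "application/ld+json":
            # Start collecting the text of this JSON-LD block
            self._in_json_ld = True
            self.json_ld_blocks.append("")

    def handle_data(self, data):
        if self._in_json_ld:
            self.json_ld_blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_json_ld = False


def audit_raw_html(html):
    """Report what is visible without executing any JavaScript."""
    parser = RawHtmlAudit()
    parser.feed(html)
    parser.close()
    return {
        "has_meta_description": bool(parser.meta_description),
        "json_ld_count": len(parser.json_ld_blocks),
    }


# Hypothetical page where the server writes the data into the HTML
server_side_page = (
    '<html><head><meta name="description" content="Hello">'
    '<script type="application/ld+json">{"@type": "Organization"}</script>'
    "</head><body></body></html>"
)

# Hypothetical page that relies on a snippet to inject the data later
client_side_page = (
    '<html><head><script src="/snippet.js"></script></head><body></body></html>'
)

print(audit_raw_html(server_side_page))
print(audit_raw_html(client_side_page))
```

The second page may look identical in a browser once the snippet has run, but nothing in the raw response carries the description or the schema.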

This distinction matters far more in 2025 than it did in 2015.

How Google Handles JavaScript Today

Googlebot has changed.

It uses an evergreen Chromium renderer and can execute JavaScript extremely well. In most cases, Google will:

- Crawl the raw HTML

- Queue the page for rendering

- Execute JavaScript

- Index the rendered DOM

For Google SEO alone, this means client-side injected schema and metadata can work. And in many cases, it does.

Google even officially states that JSON-LD schema injected via JavaScript is supported.

However, there are important caveats:

- Rendering is not guaranteed on first crawl

- Rendering can be deferred

- Rendering can be skipped on heavy or slow pages

- Meta descriptions injected late are often ignored

- Canonicals, robots tags, and head mutations are brittle

Google engineers have repeatedly said the same thing in different ways: critical metadata should be in the server-rendered HTML whenever possible.

JavaScript SEO works, but it is not deterministic.

Google Is Not the Only Consumer of the Web Anymore

This is where AEO changes everything.

Large Language Models do not crawl the web the way Google does.

Most AI crawlers:

- Do not execute JavaScript

- Do not render the DOM

- Do not wait for async scripts

- Only read the raw HTML response

This includes:

- GPTBot (OpenAI)

- ClaudeBot (Anthropic)

- Perplexity crawlers

- Most training and retrieval pipelines

If schema or metadata is injected client-side, these systems never see it.

To an LLM, the page simply does not contain that data. So if you are using an AEO platform that injects schema, metadata, and other content into your website with JavaScript, it is most likely a pointless exercise for appearing in ChatGPT results.
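If you want to see which of these crawlers actually visit your site, one simple approach is to match the User-Agent header on the server. The sketch below assumes the crawlers identify themselves with the tokens shown; `PerplexityBot` in particular is our assumption for "Perplexity crawlers", and vendors change these strings, so verify them against each vendor's current documentation. The function name is hypothetical.

```python
# Assumed User-Agent tokens; verify against each vendor's published docs.
AI_CRAWLER_TOKENS = {
    "GPTBot": "OpenAI",
    "OAI-SearchBot": "OpenAI",
    "ClaudeBot": "Anthropic",
    "PerplexityBot": "Perplexity",
}


def identify_ai_crawler(user_agent):
    """Return (token, vendor) if the User-Agent names a known AI crawler, else None."""
    ua = user_agent.lower()
    for token, vendor in AI_CRAWLER_TOKENS.items():
        if token.lower() in ua:
            return token, vendor
    return None


# Illustrative User-Agent strings, not copied from real traffic
print(identify_ai_crawler("Mozilla/5.0; compatible; GPTBot/1.2; +https://openai.com/gptbot"))
print(identify_ai_crawler("Mozilla/5.0 (Windows NT 10.0) Chrome/120.0 Safari/537.36"))
```

User-Agent strings can be spoofed, so treat a match as a hint rather than proof of who is crawling.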

What About Gemini?

Gemini is the exception that proves the rule.

Google's AI systems can leverage Google's search index, which already includes rendered content. If Googlebot indexed your JavaScript-injected schema, Gemini can theoretically use it.

But this only applies to Google.

ChatGPT, Claude, Perplexity, and most answer engines do not share that advantage.

Assuming "Google can see it, therefore AI can see it" is now a dangerous assumption.

Why Meta Descriptions Still Matter for AI

Meta descriptions do not drastically change rankings. Everyone knows that.

But AI systems do not rank pages the same way search engines do.

When ChatGPT or similar tools browse the web, they usually:

- Query a search engine

- Receive titles, URLs, Schema and snippets

- Choose which pages to read

That snippet is often the meta description.

If your meta description is missing, auto-generated, or injected too late, your page is less likely to be selected as a source.
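As a rough illustration of writing the description into the HTML before it leaves the server, the Python sketch below builds the tag from a plain string. The function name and the 160-character cap are assumptions for the example; the cap is a common rule of thumb, not a documented limit.

```python
from html import escape


def render_meta_description(description, max_length=160):
    """Build a <meta name="description"> tag for the server-rendered <head>."""
    # Collapse runs of whitespace and newlines into single spaces
    text = " ".join(description.split())
    if len(text) > max_length:
        # Truncate and mark the cut with an ellipsis (max_length is an assumption)
        text = text[: max_length - 1].rstrip() + "…"
    # Escape quotes and ampersands so the attribute stays well-formed
    return f'<meta name="description" content="{escape(text, quote=True)}">'


print(render_meta_description('Fast "AEO" tools & plugins'))
```

Because the tag is part of the first response, a search engine or fetcher sees it without running any script.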

In an answer-driven world, selection matters more than ranking.

Structured Data Is Becoming AI Infrastructure

Schema is no longer just about rich snippets.

It is how machines understand:

- Who you are

- What you sell

- What your content answers

- Whether you are authoritative

- Whether your facts are structured or ambiguous

Even if an LLM does not parse JSON-LD directly, search engines do. And AI systems increasingly rely on those structured interpretations.

Schema that never makes it into the HTML is schema that may never exist as far as AI is concerned.

Handling Schema for AEO

AI engines rely heavily on Schema.org vocabulary to understand entities. That's why EZY injects:

- JSON-LD: Placed in the <head> or top of <body>.

- Key Types: Article, Product, FAQPage, Organization.

- Entity Linking: Use sameAs to link your content to authoritative sources (Wikidata, Crunchbase) to help the LLM disambiguate your brand.
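For readers who have not written JSON-LD by hand, here is a small Python sketch of what an Organization block with `sameAs` links could look like when generated on the server. The helper name, the company details, and the Wikidata and Crunchbase URLs are placeholders for illustration, not real entities or the plugin's actual output.

```python
import json


def organization_json_ld(name, url, same_as):
    """Return a <script type="application/ld+json"> tag describing an Organization."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }
    # Escape "</" so a value can never close the <script> element early;
    # "\/" is a valid JSON escape, so the payload still parses as JSON.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Placeholder values only
print(
    organization_json_ld(
        "Example Co",
        "https://www.example.com",
        [
            "https://www.wikidata.org/wiki/Q00000000",
            "https://www.crunchbase.com/organization/example-co",
        ],
    )
)
```

The same pattern extends to Article, Product, and FAQPage by changing `@type` and the properties supplied.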

Why One-Line JavaScript SEO Tools Are Risky for AEO

Some platforms rely entirely on a single JavaScript include to modify SEO data.

That approach is fine if your only goal is Google rankings.

It is not fine if your goal is:

- Being cited by AI

- Being understood by LLMs

- Being future-proof as answer engines replace search

If the data is not in the HTML, it does not exist to most AI systems.

Energy and Sustainability

There is a growing focus on the energy cost of AI.

The Cost of JS: Executing JavaScript consumes significant electricity (CPU cycles) on the crawler's servers.

The Static Advantage: Parsing static HTML consumes a fraction of the energy.

Future Policy: It is plausible that future "Eco-Friendly" crawling standards or cost-optimization protocols will explicitly penalize or ignore sites requiring heavy client-side execution for basic content discovery.

The "Text-Fetcher" Architecture

Multiple independent analyses and server log audits confirm that OpenAI's crawlers do NOT execute JavaScript.

Evidence: When OAI-SearchBot visits a page, it performs a simple GET request. It does not download associated .js files, nor does it wait for DOM manipulation.
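One way to check this against your own access logs is to count how often a given crawler requests JavaScript files compared with everything else. The Python sketch below assumes logs in the common "combined" format; the function name and the two sample lines are synthetic, written for this example rather than taken from a real server.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer, and User-Agent fields
# of a combined-format access log line.
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def summarize_bot_requests(lines, bot_token):
    """Count JavaScript vs other requests made by User-Agents containing bot_token."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or bot_token.lower() not in match.group("ua").lower():
            continue
        # Ignore the query string when checking the file extension
        path = match.group("path").split("?", 1)[0].lower()
        kind = "javascript" if path.endswith((".js", ".mjs")) else "other"
        counts[kind] += 1
    return counts


# Synthetic sample lines for illustration only
sample_lines = [
    '203.0.113.5 - - [01/Jan/2025:00:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; OAI-SearchBot/1.0"',
    '198.51.100.7 - - [01/Jan/2025:00:00:01 +0000] "GET /app.js?v=2 HTTP/1.1" 200 900 "https://example.com/pricing" "Mozilla/5.0 Chrome/120"',
]

print(summarize_bot_requests(sample_lines, "OAI-SearchBot"))
```

If a crawler's "javascript" count stays at zero across many page fetches, that is consistent with it not rendering pages, though it is not conclusive on its own.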

Implication for JS Injection: If you use a JavaScript snippet to inject your meta description or schema.org data, ChatGPT is 100% blind to it. It sees only the raw HTML code. If that code contains an empty title tag or missing schema, ChatGPT assigns zero SEO value to those elements.

Why OpenAI Skips Rendering

The decision is economic. Headless browser rendering is estimated to be 10x to 100x more expensive than raw HTML extraction.

This Is Why We Pivoted Hard Toward Server-Side Output

We now use EZY Connect only to track AI agent visits to your website. JavaScript snippets are not aligned with where the web is going.

Answer engines require:

- Deterministic data

- Zero ambiguity

- No JavaScript assumptions

- No DOM mutation dependencies

If a Large Action Model is going to book, buy, reserve, or transact on behalf of a human, it cannot guess.

That future demands static, verifiable, machine-readable facts.

And that is why we have built bespoke plugins for the world's largest CMS platforms, which you can connect to directly from EZY.ai.

The Direction We Believe the Web Is Moving

Five years from now:

- AI agents will not parse megabytes of JavaScript

- Edge AI will prefer clean HTML

- Structured facts will matter more than keywords

- Websites will act as machine translators, not brochures

This is why EZY.ai now prioritizes:

- WordPress plugin output

- Shopify plugin output

- (More Plugins coming this year)

- Server-side schema

- Deterministic metadata

- Explicit factual structure

- AI-first crawling assumptions

JavaScript still has a role.

But it cannot be the foundation.

The Practical Takeaway

If your SEO or AEO depends entirely on JavaScript injection:

- Google might see it

- AI probably will not

- ChatGPT will not see it

- The future definitely should not rely on it

If you want AI systems to understand you, trust you, and transact with you, the data must exist before the browser ever runs a script.

To future-proof your architecture, visit EZY.ai and test one of our CMS plugins for free, and update your Robots or LLMs file from our Dashboard. Upgrade to EZY Pro for the full feature set, which is constantly growing.